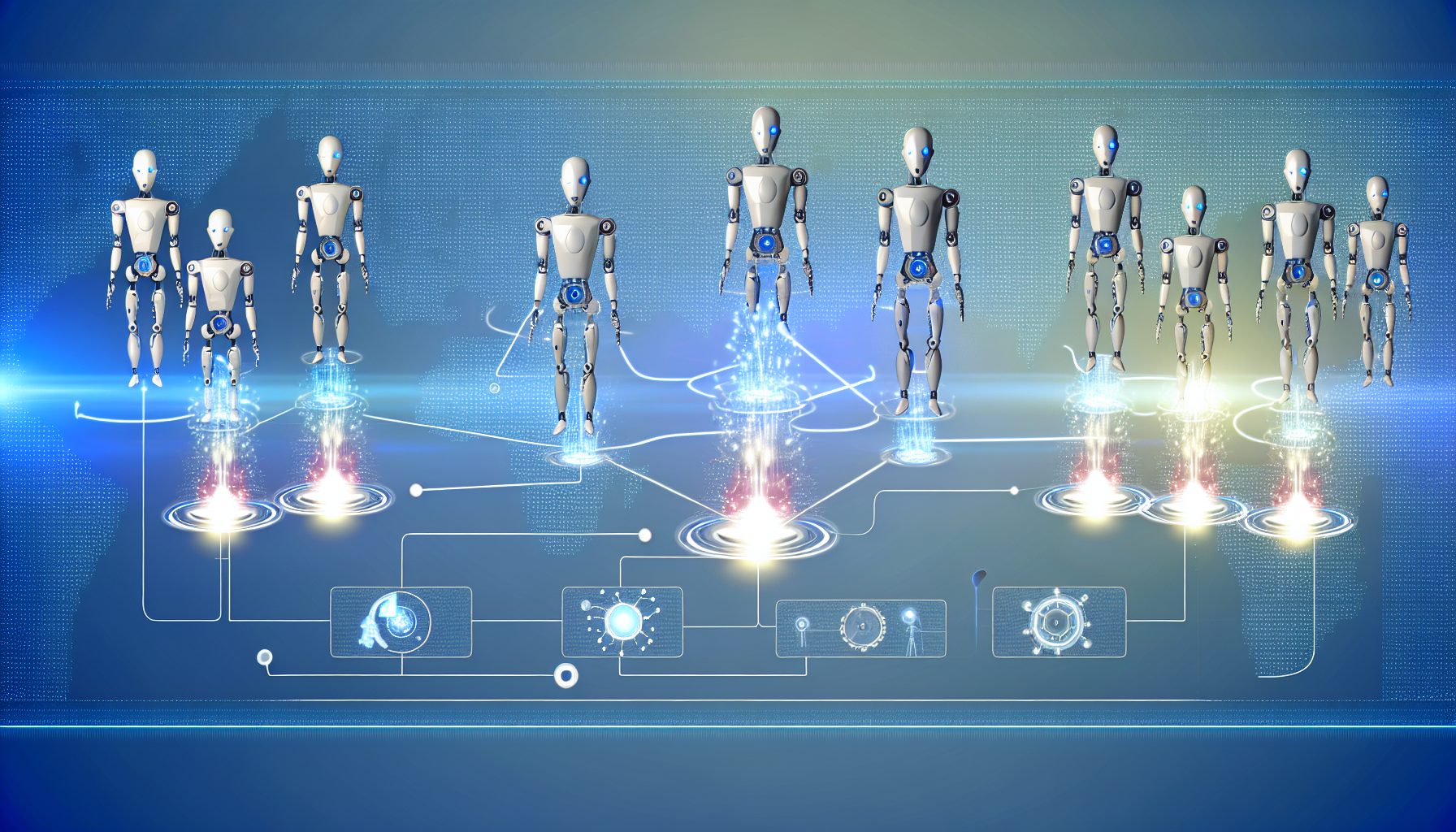

From Solo Bots to Agent Orchestration: Building Real-World Multi-Agent AI Workflows with LLM Agents and Prompt Pipelines

Transitioning from isolated AI bots to orchestrated multi-agent workflows can significantly enhance operational capabilities and service quality. This article explores how businesses can successfully implement multi-agent AI systems using LLM (Large Language Model) agents and prompt pipelines.

Estimated Reading Time: 7 minutes.

- Leveraging LLM agents for improved communication.

- Understanding the challenges of multi-agent systems.

- Implementing effective agent orchestrations.

- Real-world applications in customer support and other sectors.

- Optimizing performance through robust frameworks.

Table of Contents

- Context and Challenges

- Solution / Approach

- Concrete Example / Case Study

- FAQ

- Authority References

- Conclusion

Context and Challenges

Multi-agent AI systems enable multiple intelligent agents to collaborate and communicate with each other to achieve specific goals. These agents can perform a variety of tasks, from data collection to decision-making. However, implementing such systems presents several challenges:

- Complex Coordination: As the number of agents increases, coordinating actions can lead to communication overhead and synchronization challenges.

- Scalability: Efficiently scaling a multi-agent system without compromising performance is vital; solutions that work for a small number of agents may falter with hundreds.

- Error Handling: The likelihood of miscommunication or errors rises, demanding robust error-checking mechanisms.

- Integration with Existing Systems: Many organizations have established systems that must incorporate new multi-agent capabilities without disrupting existing workflows.

Understanding these challenges is the first step toward designing workflows that address them. The key concepts are agent behavior, collaboration mechanisms, and the architecture of prompt pipelines.
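
To make the error-handling challenge concrete, here is a minimal sketch of one possible error-checking mechanism: every reply an agent sends is validated, and the agent is asked again if the reply is malformed. The `AgentMessage` type, the `find_problems` checks, and `send_with_retry` are hypothetical names chosen for illustration, not part of any particular framework.

```python
from dataclasses import dataclass
from typing import Callable, List, Set


@dataclass
class AgentMessage:
    """Hypothetical message envelope passed between agents."""
    sender: str
    recipient: str
    content: str


def find_problems(message: AgentMessage, known_agents: Set[str]) -> List[str]:
    """Return the problems found in a message; an empty list means it is valid."""
    problems = []
    if message.recipient not in known_agents:
        problems.append(f"unknown recipient: {message.recipient!r}")
    if not message.content.strip():
        problems.append("empty content")
    return problems


def send_with_retry(
    agent: Callable[[AgentMessage], AgentMessage],
    request: AgentMessage,
    known_agents: Set[str],
    max_attempts: int = 3,
) -> AgentMessage:
    """Call an agent, asking again until its reply validates or attempts run out."""
    problems: List[str] = []
    for _ in range(max_attempts):
        reply = agent(request)
        problems = find_problems(reply, known_agents)
        if not problems:
            return reply
    raise RuntimeError(f"no valid reply after {max_attempts} attempts: {problems}")
```

A real system would likely add logging and a fallback path (for example, handing the case to a person) instead of only raising an exception.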

Solution / Approach

The approach combines LLM agents with explicit orchestration. LLM agents can interpret and generate natural-language text, which makes them well suited to real-time interactions within a multi-agent framework. Prompt pipelines, in which each step's output feeds the next step's prompt, guide those interactions and help keep communication among agents consistent and coherent.
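
As a rough illustration of a prompt pipeline, the sketch below chains prompt templates so that each step's output becomes the next step's input. The `fake_llm` function is a stand-in that only wraps the prompt in brackets; in practice it would be replaced by a call to whichever model API is in use. The templates and function names are assumptions made for this example.

```python
from typing import Callable, List


def fake_llm(prompt: str) -> str:
    """Stand-in for a model call: wraps the prompt so the chaining is visible."""
    return f"[{prompt}]"


def run_pipeline(steps: List[str], user_input: str, llm: Callable[[str], str]) -> str:
    """Fill each template with the previous output and pass the result to the model."""
    text = user_input
    for template in steps:
        text = llm(template.format(input=text))
    return text


# Hypothetical two-step pipeline: summarize first, then draft a reply.
STEPS = [
    "Summarize: {input}",
    "Reply politely to: {input}",
]

result = run_pipeline(STEPS, "my order is late", fake_llm)
print(result)  # [Reply politely to: [Summarize: my order is late]]
```

Keeping the templates in a plain list makes the order of steps explicit and easy to test with a stub before a real model is connected.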

A robust architecture for an LLM-based multi-agent system typically consists of three primary layers:

- Input Layer: Responsible for collecting data inputs from various sources, including APIs, user interfaces, or databases.

- Processing Layer: Here, agents work collaboratively, applying their natural language processing capabilities to analyze data, assess scenarios, and provide recommendations.

- Output Layer: This layer communicates results to users or other systems, potentially generating reports, action items, or even orchestrated responses.
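
The three layers can be sketched as three plain functions. This is a simplified illustration under stated assumptions: the sources are in-memory callables rather than real APIs or databases, and `urgency_agent` and `topic_agent` are hypothetical keyword-based stubs standing in for LLM agents.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Annotated:
    record: str
    notes: Dict[str, str]


def input_layer(sources: List[Callable[[], List[str]]]) -> List[str]:
    """Collect raw records from every source."""
    records: List[str] = []
    for source in sources:
        records.extend(source())
    return records


def processing_layer(
    records: List[str], agents: Dict[str, Callable[[str], str]]
) -> List[Annotated]:
    """Let every agent add its own note to every record."""
    return [
        Annotated(record, {name: agent(record) for name, agent in agents.items()})
        for record in records
    ]


def output_layer(results: List[Annotated]) -> str:
    """Render the annotated records as a plain-text report."""
    lines = []
    for item in results:
        notes = ", ".join(f"{name}={value}" for name, value in item.notes.items())
        lines.append(f"{item.record} -> {notes}")
    return "\n".join(lines)


# Hypothetical stub agents; a real system would call a model here.
def urgency_agent(text: str) -> str:
    return "high" if "outage" in text.lower() else "normal"


def topic_agent(text: str) -> str:
    return "billing" if "invoice" in text.lower() else "general"


sources = [lambda: ["Invoice 12 is wrong"], lambda: ["Site outage since noon"]]
agents = {"urgency": urgency_agent, "topic": topic_agent}
report = output_layer(processing_layer(input_layer(sources), agents))
print(report)
```

Separating the layers this way means a source, an agent, or the report format can be swapped without touching the other two.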

Businesses can stay updated on innovations in AI agents and automation by referring to resources like Agent AI News. These insights help organizations navigate the evolving landscape of multi-agent orchestration.

Concrete Example / Case Study

Consider a customer support scenario where a business implements multiple AI agents to manage queries. Traditionally, a single bot might direct customers based on keyword recognition. A multi-agent system, however, can employ several specialized agents:

- Information Retrieval Agent: Retrieves relevant knowledge base articles to address common inquiries.

- Escalation Agent: If an issue remains unresolved, this agent escalates the case to a human representative.

- Feedback Agent: After resolution, this agent solicits feedback to enhance future interactions.

In action, the process begins when a user submits a query. The Information Retrieval Agent analyzes the input and responds with relevant information. If the issue remains unresolved, the Escalation Agent steps in and notifies a human representative. Once the case is resolved, the Feedback Agent asks the customer for feedback. This hand-off between agents can improve customer satisfaction and ease the workload on human agents.
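
The flow described above might be orchestrated along the following lines. This is a sketch, not a production design: the knowledge base entries, the keyword lookup used in place of real retrieval, and the `is_resolved` callback (which stands in for the customer confirming the answer helped) are all assumptions made for illustration.

```python
from typing import Callable, List, Optional, Tuple

# Hypothetical knowledge base keyed by a keyword.
KNOWLEDGE_BASE = {
    "password": "You can reset your password from the account settings page.",
    "refund": "Refund requests are reviewed after you submit the refund form.",
}


def retrieval_agent(query: str) -> Optional[str]:
    """Return the first article whose keyword appears in the query, if any."""
    lowered = query.lower()
    for keyword, article in KNOWLEDGE_BASE.items():
        if keyword in lowered:
            return article
    return None


def escalation_agent(query: str) -> str:
    """Hand the case to a person; here this only produces a notice."""
    return f"Escalated to a human representative: {query!r}"


def feedback_agent() -> str:
    return "How would you rate this interaction from 1 to 5?"


def handle_query(
    query: str, is_resolved: Callable[[str], bool]
) -> List[Tuple[str, str]]:
    """Run one query through the agents and return (agent, message) pairs."""
    transcript: List[Tuple[str, str]] = []
    article = retrieval_agent(query)
    if article is not None:
        transcript.append(("retrieval", article))
    if article is None or not is_resolved(article):
        transcript.append(("escalation", escalation_agent(query)))
    # In a real system this step would wait until the case is actually closed.
    transcript.append(("feedback", feedback_agent()))
    return transcript
```

For example, `handle_query("I forgot my password", lambda article: True)` involves only the retrieval and feedback agents, while a query that matches no article goes straight to escalation.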

FAQ

1. What are the main advantages of multi-agent systems?

Multi-agent systems can work on independent subtasks in parallel, which can improve efficiency. Collaboration and specialization also support more robust problem solving, because each agent can focus on a narrower part of the task.
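
As a small illustration of running agents side by side, the sketch below uses Python's `asyncio.gather` to await two stub agents concurrently. The agents are hypothetical and only sleep to imitate model latency. Strictly speaking this is concurrency rather than true parallelism, but for agents that mostly wait on network calls the effect is similar: the waits overlap instead of adding up.

```python
import asyncio
from typing import List


async def stub_agent(name: str, task: str, delay: float) -> str:
    """Hypothetical agent: sleeps to imitate model latency, then answers."""
    await asyncio.sleep(delay)
    return f"{name} finished {task}"


async def run_in_parallel(task: str) -> List[str]:
    # gather() runs the coroutines concurrently and returns results in call order.
    return await asyncio.gather(
        stub_agent("retrieval", task, 0.02),
        stub_agent("sentiment", task, 0.01),
    )


results = asyncio.run(run_in_parallel("ticket triage"))
print(results)
```

The results come back in the order the agents were listed, not the order in which they finished, which keeps downstream code simple.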

2. How do I measure the effectiveness of my multi-agent system?

The effectiveness of a multi-agent system can be gauged through metrics such as response times, resolution rates, and customer satisfaction scores. Regular analysis of these metrics helps identify areas for improvement.
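
A minimal sketch of how these three metrics could be computed from logged interactions follows. The `Interaction` record and its field names are assumptions for this example; real logs will have their own schema.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class Interaction:
    response_seconds: float
    resolved: bool
    satisfaction: Optional[int]  # e.g. a 1-5 rating, or None if not given


def summarize(interactions: List[Interaction]) -> Dict[str, Optional[float]]:
    """Compute average response time, resolution rate, and mean satisfaction."""
    if not interactions:
        raise ValueError("no interactions to summarize")
    ratings = [i.satisfaction for i in interactions if i.satisfaction is not None]
    return {
        "avg_response_seconds": mean(i.response_seconds for i in interactions),
        "resolution_rate": sum(1 for i in interactions if i.resolved) / len(interactions),
        # Customers who skipped the survey are excluded rather than counted as zero.
        "avg_satisfaction": mean(ratings) if ratings else None,
    }


log = [
    Interaction(2.0, True, 5),
    Interaction(4.0, False, None),
    Interaction(6.0, True, 3),
]
summary = summarize(log)
print(summary)
```

Tracking the same summary over time, rather than reading a single snapshot, is what makes it useful for spotting regressions.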

3. What are some common pitfalls in building multi-agent workflows?

Common pitfalls include inadequate communication protocols between agents, unclear objectives for each agent, and a failure to iteratively test and refine workflows.

Authority References

For a deeper understanding of multi-agent AI systems and LLMs, consider reviewing resources from:

- Association for the Advancement of Artificial Intelligence

- Research papers on multi-agent systems

- Multi-Agent Systems: A Modern Approach

Conclusion

Transitioning from solo bots to orchestrated multi-agent workflows is a strategic step that can improve operational efficiency. By using LLM agents within well-defined prompt pipelines, organizations can manage the complexities of multi-agent coordination, streamline processes, and improve outcomes. As AI technology continues to evolve, adopting these systems can give businesses a competitive edge in efficiency and service quality.
